ChatGPT experiment - analyzing a team's on-call impact

As you've likely seen, ChatGPT is getting a lot of attention lately. I wanted to experiment with it and see what I could learn, so this past weekend I tried out integrating ChatGPT into one of my apps. What was it like to work with and what could I potentially get from it? This is a recounting of my experiment.

A while back I built OnCalm, a frontend app that processes and analyzes a team's PagerDuty alerts. (Have a calm on-call rotation with OnCalm - I'm a big fan of puns.) I picked OnCalm as the playground for my ChatGPT experiment.

Initial experiment with the ChatGPT UI

To quickly explore what kind of analysis ChatGPT could give me, I started in the ChatGPT web UI with this prompt:

"I got 2 nighttime pages last week and 5 daytime pages, how bad is my on-call load?"

With just that prompt, the ChatGPT UI responded with a fairly in-depth answer:

"It's difficult to say whether your on-call load is bad or not based solely on the number of pages you received. The severity and urgency of the issues that generated those pages are also important factors to consider.

However, as a general rule of thumb, receiving 2 nighttime pages and 5 daytime pages in a week may be considered a moderately heavy on-call load, particularly if the issues were urgent and required a significant amount of time to resolve. It's important to keep track of your on-call workload and communicate with your team or manager if you feel that it is becoming too much to handle."

This looked promising! I felt confident enough to start integrating the ChatGPT API into OnCalm.

Coaching ChatGPT

Again starting light, I used the OpenAI API docs to build a request to the chat completions API in Postman.

The GPT-4 API had a waitlist, so I started with the GPT-3.5 Turbo API. My initial request body:

```json
{
  "model": "gpt-3.5-turbo",
  "messages": [{"role": "user", "content": "I got 2 nighttime pages last week and 5 daytime pages, how bad is my on-call load?"}]
}
```
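If you'd rather build that request in TypeScript than in Postman, the same body can be typed roughly as follows. This is a sketch that models only the two fields used in this post (`model` and `messages`); the real API accepts more. `buildRequest` is a hypothetical helper, not part of any OpenAI library.

```typescript
// Roles accepted by the chat completions API for a message.
type ChatRole = "system" | "user" | "assistant";

interface ChatMessage {
  role: ChatRole;
  content: string;
}

// Only the fields this post uses; the real request body accepts more options.
interface ChatCompletionRequest {
  model: string;
  messages: ChatMessage[];
}

// Hypothetical helper: wraps a single user prompt in the request body shape.
const buildRequest = (prompt: string, model = "gpt-3.5-turbo"): ChatCompletionRequest => ({
  model,
  messages: [{ role: "user", content: prompt }],
});

// Serialized body, equivalent to the JSON sent from Postman.
const body = JSON.stringify(
  buildRequest("I got 2 nighttime pages last week and 5 daytime pages, how bad is my on-call load?"),
);
```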

And the resulting response from ChatGPT 3.5:

"As an AI language model, I do not have the ability to determine whether your on-call load is bad or not as it may depend on various factors such as your job description, your capacity, and your company's expectations. However, you can evaluate your on-call load based on your personal experience and the impact it has on your work-life balance."

The GPT-3.5 API response wasn't nearly as interesting to me as the one the v4 UI returned - bummer!

While I waited for the v4 API to become more widely available, I experimented with giving ChatGPT more context in my prompt. I started by telling it how I interpret a disruptive on-call load:

"More than 1 nighttime page per week is very disruptive to the on-call engineer. And more than 3 daytime pages is fairly disruptive. While more than 5 daytime pages is quite disruptive. I got 2 nighttime pages last week and 5 daytime pages, how bad is my on-call load?"

With that bit of coaching, ChatGPT had no problem analyzing my on-call load:

"Your on-call load last week was fairly disruptive, as you received 2 nighttime pages (which is the threshold for disruption) and 5 daytime pages which exceed the 3-page threshold for disruption. It's important to find ways to reduce the number of pages to improve the work-life balance of on-call engineers."

It took some additional context in my prompt, but ChatGPT now gave me an interesting result. Time to integrate it into the OnCalm app!
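Before doing that, note that the thresholds in the coaching prompt are simple enough to apply in code. That gives a deterministic baseline you can compare ChatGPT's wording against. This is a minimal sketch: the function names, the "below threshold" label, and the rule that the overall rating is the worse of the two ratings are my own assumptions, not part of the prompt.

```typescript
// Ratings taken from the prompt's thresholds, plus an assumed "below threshold" label.
type Disruption = "below threshold" | "fairly disruptive" | "quite disruptive" | "very disruptive";

// Ordered from least to most disruptive, used to pick the worse rating.
const severityOrder: Disruption[] = ["below threshold", "fairly disruptive", "quite disruptive", "very disruptive"];

// Prompt rule: more than 1 nighttime page per week is very disruptive.
const nighttimeDisruption = (nighttime: number): Disruption =>
  nighttime > 1 ? "very disruptive" : "below threshold";

// Prompt rules: more than 5 daytime pages is quite disruptive, more than 3 is fairly disruptive.
const daytimeDisruption = (daytime: number): Disruption => {
  if (daytime > 5) return "quite disruptive";
  if (daytime > 3) return "fairly disruptive";
  return "below threshold";
};

// Assumption: the overall rating is whichever of the two ratings is more severe.
const classifyLoad = (nighttime: number, daytime: number) => {
  const night = nighttimeDisruption(nighttime);
  const day = daytimeDisruption(daytime);
  const overall = severityOrder.indexOf(night) >= severityOrder.indexOf(day) ? night : day;
  return { night, day, overall };
};
```

Under these rules, `classifyLoad(2, 5)` rates the 2 nighttime pages "very disruptive" on their own and the 5 daytime pages "fairly disruptive".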

Calling ChatGPT API from OnCalm

Like many APIs, the ChatGPT API uses an API key for authorization. OnCalm is purely a frontend React app, and I didn't want to leak my ChatGPT API key to the browser. OnCalm is deployed on Netlify, so I created a Netlify edge function to encapsulate the call to the ChatGPT API, including the API key and the prompt creation.

Creating an edge function on Netlify turned out to be fairly straightforward. First, I created a JavaScript file in the default location /netlify/edge-functions/ in my app codebase. Later I converted the file to TypeScript.

The existing OnCalm React app already calculated the number of nighttime and daytime alerts, so I passed those counts to the edge function to build the ChatGPT prompt.
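As a rough illustration of that counting step, here is a hypothetical sketch. The `Alert` shape and the 10pm to 8am nighttime window are assumptions. `getHours()` uses the local time zone of whatever runtime executes it, which may not match the on-call engineer's time zone. The returned field names match the `daytime` and `nighttime` fields that the edge function reads.

```typescript
// Hypothetical alert shape; only the creation time matters for bucketing.
interface Alert {
  createdAt: Date;
}

// Assumed nighttime window: from 22:00 up to (but not including) 08:00 local time.
const NIGHT_START_HOUR = 22;
const NIGHT_END_HOUR = 8;

const isNighttime = (date: Date): boolean => {
  const hour = date.getHours(); // hour in the runtime's local time zone
  return hour >= NIGHT_START_HOUR || hour < NIGHT_END_HOUR;
};

// Splits alerts into the two counts sent to the edge function.
const countAlerts = (alerts: Alert[]): { daytime: number; nighttime: number } => {
  let nighttime = 0;
  for (const alert of alerts) {
    if (isNighttime(alert.createdAt)) nighttime++;
  }
  return { daytime: alerts.length - nighttime, nighttime };
};
```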

I then wrote the code that builds the prompt and calls ChatGPT. OpenAI has a Node package for its API, but Netlify edge functions run on Deno, which has some limitations around using Node packages, so I couldn't easily use it. Since I was only making one API call, I wrote the fetch call by hand:

```typescript
const chatCompletion = async (daytimeCount: number, nighttimeCount: number): Promise<string> => {
  const prompt = createPrompt(daytimeCount, nighttimeCount)

  // Read the key from the edge function's environment so it never reaches the browser
  const apiKey = Deno.env.get("OPENAI_API_KEY");
  if (!apiKey) {
    throw new Error("OPENAI_API_KEY is not set");
  }

  const requestBody = {
    model: "gpt-3.5-turbo",
    messages: [{
      role: "user", content: prompt
    }]
  }

  const response = await fetch("https://api.openai.com/v1/chat/completions", {
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      "Authorization": `Bearer ${apiKey}`
    },
    body: JSON.stringify(requestBody)
  });

  // Error responses don't include "choices", so fail with the HTTP status instead
  if (!response.ok) {
    throw new Error(`OpenAI API returned HTTP ${response.status}`);
  }

  const responseJson = await response.json();

  // Use the first choice's message text, or an empty string if there are no choices
  const choices = responseJson.choices ?? [];

  if (choices.length > 0) {
    return choices[0].message.content;
  } else {
    return ""
  }
}
```

And the function that creates the prompt text for ChatGPT:

```typescript
const createPrompt = (daytimeCount: number, nighttimeCount: number): string => {
  return `More than 1 nighttime page per week is very disruptive to the on-call engineer. And more than 3 daytime pages is fairly disruptive. While more than 5 daytime pages is quite disruptive.

  I got ${nighttimeCount} nighttime pages last week and ${daytimeCount} daytime pages, how bad is my on-call load?
  `
}
```

Finally, the resulting edge function that strings the calls together and returns the JSON response back to the React app:

```typescript
import type { Context } from "https://edge.netlify.com";

export default async (request: Request, context: Context) => {
  const jsonBody = await request.json()

  const daytimeCount = jsonBody.daytime;
  const nighttimeCount = jsonBody.nighttime;

  const chatGptResponse = await chatCompletion(daytimeCount, nighttimeCount)

  const response = { message: chatGptResponse }

  // Tell the React app the body is JSON
  return new Response(JSON.stringify(response), {
    headers: { "Content-Type": "application/json" },
  });
};
```
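The edge function reads `daytime` and `nighttime` straight from a browser request, so it may be worth validating them before they are interpolated into the prompt. Here is a minimal sketch using a hypothetical `parseCounts` helper that accepts only non-negative integers:

```typescript
// Validated counts passed on to chatCompletion.
interface PageCounts {
  daytime: number;
  nighttime: number;
}

// A count must be a non-negative whole number.
const isCount = (value: unknown): value is number =>
  typeof value === "number" && Number.isInteger(value) && value >= 0;

// Hypothetical helper: returns the counts, or null if the body is malformed.
const parseCounts = (body: unknown): PageCounts | null => {
  if (typeof body !== "object" || body === null) return null;
  const { daytime, nighttime } = body as Record<string, unknown>;
  if (!isCount(daytime) || !isCount(nighttime)) return null;
  return { daytime, nighttime };
};
```

In the edge function, you could call `parseCounts(await request.json())` and return a `400` response when it yields `null`, instead of sending arbitrary values to ChatGPT.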

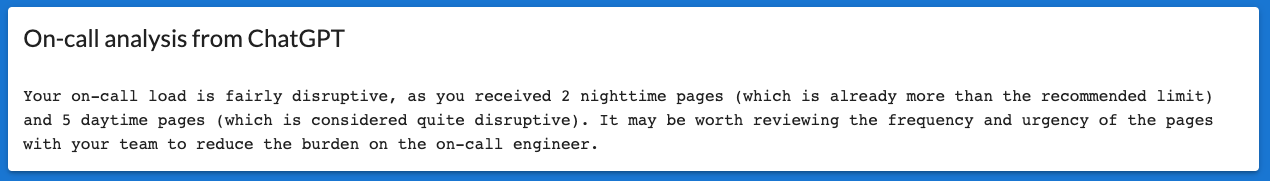

I then updated the React app to call this edge function and display the results in the OnCalm UI:
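The client side of that call could look roughly like the sketch below. The `/api/on-call-analysis` path is hypothetical, because the real path depends on how the edge function is declared on Netlify. The fetch implementation is passed in as a parameter so the function can run without a browser. The request fields and the `{ message }` response shape match the edge function above.

```typescript
// Minimal fetch-compatible signature; the browser's fetch satisfies it.
type FetchLike = (
  url: string,
  init: { method: string; headers: Record<string, string>; body: string },
) => Promise<{ ok: boolean; status: number; json(): Promise<unknown> }>;

// Hypothetical path; use whatever path the edge function is declared on.
const EDGE_FUNCTION_PATH = "/api/on-call-analysis";

const fetchOnCallAnalysis = async (
  daytime: number,
  nighttime: number,
  fetchImpl: FetchLike,
): Promise<string> => {
  const response = await fetchImpl(EDGE_FUNCTION_PATH, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    // Field names match what the edge function reads: jsonBody.daytime and jsonBody.nighttime
    body: JSON.stringify({ daytime, nighttime }),
  });
  if (!response.ok) {
    throw new Error(`Edge function returned HTTP ${response.status}`);
  }
  // The edge function responds with { message: chatGptResponse }
  const { message } = (await response.json()) as { message: string };
  return message;
};
```

In the React app, you'd call `fetchOnCallAnalysis(daytime, nighttime, fetch)` from an event handler or effect and store the returned message in state for rendering.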

Conclusion

The ChatGPT API was smooth to interact with, and I enjoyed working with ChatGPT itself. My initial experiment was fairly limited, but I could see the value of working with ChatGPT, especially given how coachable it is.

If you want to try it yourself, head over to the OnCalm site and upload your PagerDuty alert CSV file or connect through the PagerDuty API.